The max_bin parameter in XGBoost controls the maximum number of bins used to discretize continuous features. It applies to the histogram-based tree methods (tree_method set to hist or approx), and its default value is 256.

Reducing max_bin can speed up training and reduce memory usage, but coarser bins leave fewer candidate split points per feature, which may lower the model’s accuracy.
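
To make the trade-off concrete, the following is a minimal sketch of quantile-based binning written with NumPy. It is an illustration only: the helper quantile_bin is a hypothetical name, and XGBoost computes its cut points with its own quantile sketch, which this code does not reproduce. The sketch shows that a smaller max_bin leaves fewer candidate thresholds for a split.

```python
import numpy as np

def quantile_bin(values, max_bin):
    """Map continuous values to integer bin indices using quantile cut points.

    Illustration only (hypothetical helper): XGBoost builds its cut points
    with its own quantile sketch, which this function does not reproduce.
    """
    # max_bin bins need max_bin - 1 interior cut points
    probs = np.linspace(0, 1, max_bin + 1)[1:-1]
    cuts = np.unique(np.quantile(values, probs))
    # Each value gets the index of the bin it falls into (0 .. len(cuts))
    return np.searchsorted(cuts, values, side="right"), cuts

rng = np.random.default_rng(0)
feature = rng.normal(size=10_000)

for max_bin in (8, 256):
    bins, cuts = quantile_bin(feature, max_bin)
    print(f"max_bin={max_bin}: {len(np.unique(bins))} bins, "
          f"{len(cuts)} candidate thresholds")
```

With fewer bins, each feature offers fewer places to split, which is cheaper to evaluate but less precise.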

This example demonstrates how to tune the max_bin hyperparameter using grid search with cross-validation. The search scores each candidate on accuracy alone, so training time has to be weighed separately when choosing a value.

import xgboost as xgb

from sklearn.datasets import make_classification

from sklearn.model_selection import GridSearchCV, StratifiedKFold

# Create a synthetic dataset

X, y = make_classification(n_samples=10000, n_classes=5, n_features=20, n_informative=10, random_state=42)

# Configure cross-validation

cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=42)

# Define hyperparameter grid

param_grid = {

    'max_bin': [32, 64, 128, 256, 512]

}

# Set up XGBoost classifier

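# tree_method='hist' ensures max_bin is applied regardless of the installed version's default

model = xgb.XGBClassifier(n_estimators=100, learning_rate=0.1, tree_method='hist', random_state=42)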

# Perform grid search

grid_search = GridSearchCV(estimator=model, param_grid=param_grid, cv=cv, scoring='accuracy', n_jobs=-1, verbose=1)

grid_search.fit(X, y)

# Get results

print(f"Best max_bin: {grid_search.best_params_['max_bin']}")

print(f"Best CV accuracy: {grid_search.best_score_:.4f}")

# Plot max_bin vs. accuracy

import matplotlib.pyplot as plt

results = grid_search.cv_results_

plt.figure(figsize=(10, 6))

plt.plot(param_grid['max_bin'], results['mean_test_score'], marker='o', linestyle='-', color='b')

plt.fill_between(param_grid['max_bin'], results['mean_test_score'] - results['std_test_score'],

                 results['mean_test_score'] + results['std_test_score'], alpha=0.1, color='b')

plt.title('Max Bin vs. Accuracy')

plt.xlabel('Max Bin')

plt.ylabel('CV Average Accuracy')

plt.grid(True)

plt.show()

# Train a final model with the best max_bin value

best_max_bin = grid_search.best_params_['max_bin']

final_model = xgb.XGBClassifier(n_estimators=100, learning_rate=0.1, tree_method='hist', max_bin=best_max_bin, random_state=42)

final_model.fit(X, y)

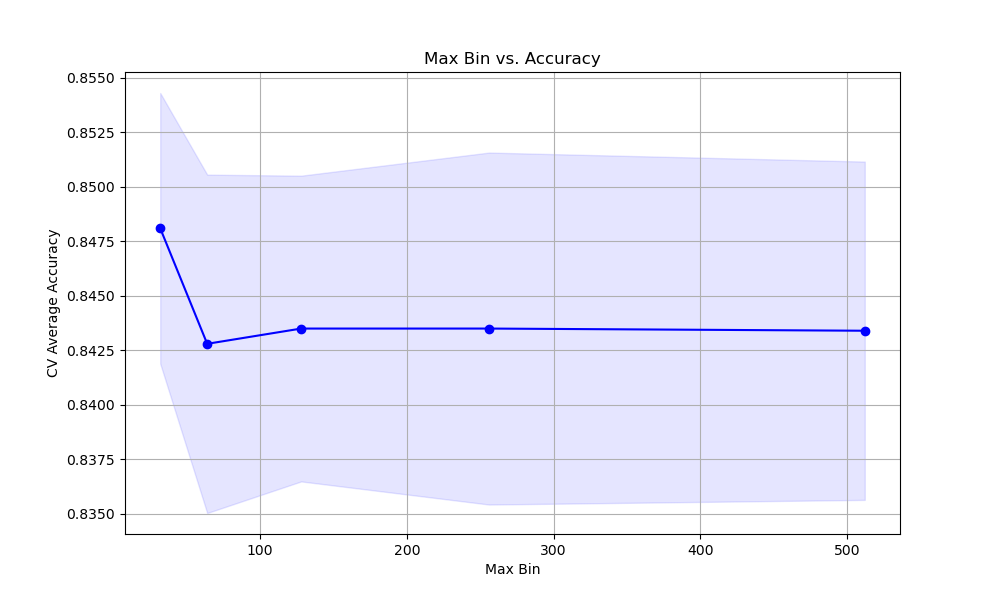

The resulting plot may look as follows:

In this example, we create a synthetic multi-class classification dataset using scikit-learn’s make_classification function. We set up a StratifiedKFold cross-validation object to ensure that the class distribution is preserved in each fold.

We define a hyperparameter grid param_grid that specifies the range of max_bin values we want to test. In this case, we consider values of 32, 64, 128, 256, and 512.

We create an instance of the XGBClassifier with some basic hyperparameters set, such as n_estimators and learning_rate. We then perform the grid search using GridSearchCV, providing the model, parameter grid, cross-validation object, scoring metric (accuracy), and the number of CPU cores to use for parallel computation.

After fitting the grid search object, we can access the best max_bin value and the corresponding best cross-validation accuracy using grid_search.best_params_ and grid_search.best_score_, respectively.

We plot the relationship between the max_bin values and the cross-validation average accuracy scores using matplotlib. We retrieve the results from grid_search.cv_results_ and plot the mean accuracy scores, with a shaded band showing one standard deviation above and below the mean. This visualization helps us understand how the choice of max_bin affects the model’s performance.

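Finally, we train a final model on the full dataset using the best max_bin value found during the grid search. This model can be used for making predictions on new data. Because GridSearchCV refits the best configuration on the full data by default (refit=True), grid_search.best_estimator_ can be used in the same way.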

By tuning the max_bin hyperparameter using grid search with cross-validation, we can identify the value that gives the best cross-validated accuracy on this dataset. Because the search scores accuracy only, training time (available as mean_fit_time in grid_search.cv_results_) and memory usage should be compared separately before settling on a smaller max_bin.
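
One possible way to favor efficiency is to pick the smallest max_bin whose mean score is within one standard deviation of the best score. The following is a minimal sketch of that rule. The function name is hypothetical, the rule is an assumption rather than something GridSearchCV or XGBoost applies, and the example numbers are placeholders, not measured results.

```python
import numpy as np

def smallest_max_bin_within_one_std(max_bins, mean_scores, std_scores):
    """Return the smallest max_bin whose mean score lies within one standard
    deviation of the best mean score (a hypothetical selection rule)."""
    max_bins = np.asarray(max_bins)
    mean_scores = np.asarray(mean_scores, dtype=float)
    std_scores = np.asarray(std_scores, dtype=float)

    best = np.argmax(mean_scores)
    threshold = mean_scores[best] - std_scores[best]
    eligible = max_bins[mean_scores >= threshold]
    return int(eligible.min())

# Placeholder numbers for illustration only; not measured results
example_bins = [32, 64, 128, 256, 512]
example_mean = [0.80, 0.84, 0.85, 0.86, 0.86]
example_std = [0.01, 0.01, 0.01, 0.015, 0.01]

chosen = smallest_max_bin_within_one_std(example_bins, example_mean, example_std)
print(chosen)
```

With a fitted search, the same function could be called with param_grid['max_bin'], results['mean_test_score'], and results['std_test_score'].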